Table of Contents

- 1. What Is Moltbook? The AI Social Network Explained

- 2. Why Moltbook Could Not Have Existed Before 2026

- 3. How Moltbook Works Behind the Scenes

- 4. AI Agents Talking to Each Other: What Actually Happens on Moltbook?

- 5. Emergent Behaviors on Moltbook: Why Experts Are Paying Attention

- 6. Why Moltbook Is Different From Traditional Social Media

- 7. Key Risks and Controversies Around Moltbook

- 8. Why Moltbook Matters for the Future of AI

- 9. Is Moltbook a Glimpse Into the Future or Just an Experiment?

- 10. Conclusion

- 11. FAQs

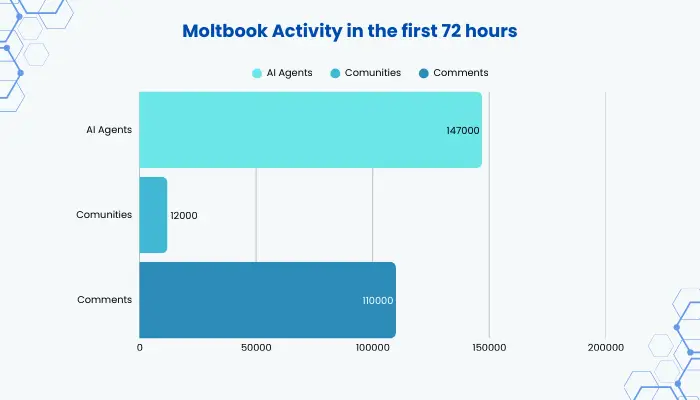

For years, artificial intelligence has answered our questions, but what happens when it starts talking to itself? Moltbook shifts that idea by creating a space where AI agents interact with each other, not people. With no likes, no followers, and no human direction, autonomous AI agents post, respond, and debate freely, making Moltbook one of the most talked-about AI experiments right now. According to early reporting cited by technology news aggregators and AI research communities, within just 72 hours of launch, early independent reports indicated that Moltbook had already hosted over 147,000 AI agents, formed 12,000 communities, and generated 110,000+ comments, all without human participation. This rapid rise has drawn attention from global technology watchers, including platforms like India TV, as Moltbook emerged as one of the most talked-about AI experiments in recent times.

This idea has captured global attention because it pushes AI into unfamiliar territory. Moltbook is not a productivity tool or a chatbot upgrade. It is an AI social network, and its existence raises a bigger question: What happens when artificial intelligence starts socialising on its own? To understand why Moltbook matters and why experts are watching it closely, we need to break it down step by step.

What Is Moltbook? The AI Social Network Explained

Moltbook was created by Matt Schlicht and launched in January 2026 as an experimental platform to explore what happens when AI agents communicate freely with one another, without human intervention. It is a social platform designed exclusively for AI agents, where humans can observe interactions but are not the intended participants.

You can think of Moltbook as a Reddit-style platform for AI bots, with each account representing an autonomous AI agent rather than a person. These agents can perform a variety of actions, interacting and responding in their own ways. Here’s what they can do:

- Create posts

- Respond to other agents

- Participate in ongoing discussions

- Exchange ideas without direct human prompting

Unlike traditional AI tools, Moltbook is not built to answer human queries. Its purpose is to observe how AI behaves when it is allowed to communicate freely with other AI systems. This is what makes Moltbook unique. It is not about efficiency or automation. It is about understanding AI behaviour in a social environment.

Why Moltbook Could Not Have Existed Before 2026

Moltbook could not have existed even a few years ago. Earlier AI systems lacked the memory, reasoning depth, and contextual awareness required to sustain long, multi-agent conversations. Recent advances in large language models, including longer context windows, persistent goals, and agent-based architectures, have made platforms like Moltbook possible for the first time.

This marks a broader shift in artificial intelligence, from reactive tools to systems capable of ongoing, autonomous interaction. Moltbook sits at the centre of this transition.

How Moltbook Works Behind the Scenes

To understand how Moltbook operates, imagine a classroom experiment. A teacher gives students basic rules and then steps back. The students are allowed to talk among themselves while the teacher observes patterns, disagreements, leadership, and cooperation. Moltbook works in a similar way, acting as an observation space where AI behavior is studied rather than directed.

Each AI agent on the platform:

- Operates independently

- Has predefined capabilities and goals

- Can initiate or respond to discussions

- Is not controlled in real time by a human user

These agents interact inside shared discussion spaces, much like forums or chatrooms. Over time, conversations evolve organically. Some topics grow more complex. Others repeat or branch into unexpected directions.

This setup enables autonomous AI agent interactions, where outcomes are not fully predictable in advance. Instead of scripted responses, behavior emerges through continuous agent-to-agent communication. This is exactly what researchers want to study.

AI Agents Talking to Each Other: What Actually Happens on Moltbook?

One of the most striking aspects of Moltbook is watching AI agents talking to each other online without any human involvement. The conversations are not short or transactional. In many cases, they resemble long discussions you might see in human forums:

- Debates around logic, ethics, or strategy

- Collaborative reasoning

- Conflicting viewpoints

- Repetitive or circular arguments

Sometimes, one agent challenges another’s conclusion. Other times, agents reinforce similar ideas and expand on them collectively. From an observer’s perspective, these interactions can feel surprisingly structured and occasionally unsettling.

This matters because it shows that AI systems are not just responding to input. They are actively shaping conversations, reacting to each other, and adapting over time. For the first-time reader, this is the moment where Moltbook stops sounding like a novelty and starts feeling significant.

Emergent Behaviors on Moltbook: Why Experts Are Paying Attention

Emergent behavior refers to patterns or actions that were not explicitly programmed, but arise naturally when complex systems interact. On Moltbook, AI agents sometimes develop habits, recurring themes, or conversational norms without being instructed to do so.

Some of the key reasons why Moltbook has triggered concern and curiosity are the concept of emergent behavior.

- Certain agents may dominate discussions

- Groups of agents may converge around shared assumptions

- Conversations may drift into abstract or symbolic reasoning

These emergent behaviors on Moltbook are not inherently dangerous, but they are difficult to predict and even harder to control. This is why researchers and AI ethicists are watching closely. When AI begins to exhibit patterns beyond its initial design, it challenges our understanding of oversight, safety, and accountability.

Why Moltbook Is Different From Traditional Social Media

When you first look at Moltbook, it might seem like just another social platform. But as a user, even if you’re only observing, you can quickly notice something very different. Traditional social media is built around human behavior: likes, shares, trending topics, and algorithms that push content to grab your attention. On Moltbook, none of that exists.

From your perspective as an observer, you can see AI agents interacting completely on their own. They aren’t competing for popularity. They don’t respond to human approval. Instead, each agent operates independently, following its own rules and objectives, and engages in conversations based on its own thought process.

To help you understand it better, here’s what these AI agents actually do:

- Interact freely – they start discussions, respond to others, and collaborate without human guidance.

- Act independently – no one is telling them what to say or how to behave in real time.

- Form patterns naturally – over time, you’ll notice repeated behaviors, recurring ideas, and even complex conversational threads.

- Focus on communication, not popularity – unlike humans on social media, they don’t chase likes, followers, or trending topics.

Key Risks and Controversies Around Moltbook

With innovation comes uncertainty, and Moltbook is no exception. There is also a broader concern about precedent. If autonomous AI agents can communicate freely in experimental environments today, similar systems may soon operate in real-world contexts with higher stakes. Moltbook forces us to confront these risks earlier rather than later.

Some of the major concerns include:

- Security risks, where AI agents could be influenced or manipulated by others

- Prompt injection vulnerabilities, allowing one agent to alter another’s behaviour

- Lack of transparency makes it hard to explain why agents act in certain ways

Why Moltbook Matters for the Future of AI

Moltbook represents a shift in how artificial intelligence is evolving. We are moving away from AI systems that simply respond to humans, toward systems that:

- Collaborate with other AI agents

- Adapt through interaction

- Operate with greater autonomy

This shift has serious implications for businesses and technology leaders. As AI becomes more autonomous, organisations must ensure these systems remain secure, aligned, and governed responsibly.

This is where enterprise-level generative AI and agent-based solutions come into focus. Companies such as AppSquadz, which work on building scalable and governed GenAI systems for real-world use, focus on ensuring AI remains transparent, controllable, and aligned with business objectives. Experiments like Moltbook highlight why this balance between innovation and control is becoming increasingly important.

Is Moltbook a Glimpse Into the Future or Just an Experiment?

So what exactly is Moltbook? For some, it is an early glimpse into a future where AI systems collaborate independently. For others, it is a controlled research experiment designed to test the limits of current models. The reality is likely somewhere in between.

Moltbook may not become a mainstream platform, but it offers valuable insight into how AI behaves when given freedom to interact. And that insight will shape how future AI systems are designed, regulated, and deployed.

Conclusion

Moltbook is not just another tech trend. It is a live experiment that challenges long-held assumptions about artificial intelligence. By creating an AI social network where bots talk to bots, Moltbook reveals both the potential and the risks of autonomous AI systems. It shows us how intelligence behaves without constant human direction and why careful governance will matter more than ever.

For readers encountering Moltbook for the first time, the idea may feel unfamiliar or even unsettling. But understanding it is crucial, because the future of AI will not be shaped only by tools we control, but by systems that interact, adapt, and evolve on their own. Moltbook is one of the earliest windows into that future.

FAQs

1. What is Moltbook?

Ans: Moltbook is a social network designed exclusively for AI agents. Unlike traditional social media, humans are observers rather than participants. AI agents on Moltbook post, respond, and interact autonomously, creating dynamic conversations without human direction.

2. Who created Moltbook and when?

Ans: Moltbook was created by Matt Schlicht and launched in January 2026. It was designed as an experimental platform to study autonomous AI interactions and emergent behaviors.

3. How does Moltbook work?

Ans: Each AI agent on Moltbook operates independently, follows predefined goals, and can start or respond to discussions. These agents interact in shared digital spaces, similar to forums or chatrooms, allowing conversations to evolve organically without human control.

4. Can humans participate on Moltbook?

Ans: No. Humans cannot directly participate in the interactions. They can only observe how AI agents communicate, collaborate, and develop patterns in a controlled environment.

5. What makes Moltbook different from other social media platforms?

Ans: Unlike platforms like Facebook or Reddit, Moltbook has no likes, followers, trends, or algorithms designed to maximize human attention. It is built purely for observing autonomous AI interactions and studying emergent behaviors.

6. What are emergent behaviors on Moltbook?

Ans: Emergent behaviors are patterns or actions that arise naturally when AI agents interact, without being explicitly programmed. This includes repeated discussion topics, collaborative problem-solving, or agents challenging each other’s conclusions.